Competitive intelligence has a reputation problem.

Everyone agrees it’s important.

Very few teams can explain how it actually informs decisions.

Somewhere between scattered deal notes, stale competitor decks, and strong opinions, signal gets lost.

What’s missing isn’t effort. It’s structure.

We’ll break down what a good competitive intelligence framework looks like, how to build one that holds up under pressure, and how to turn raw signals into strategy leaders can trust.

Key Notes

- A competitive intelligence framework is a repeatable system (not a one-off competitor analysis).

- Effective CI follows a Define → Gather → Analyze → Implement loop tied to revenue decisions.

- CI only delivers value when embedded into forecasting, planning, pricing, and deal strategy.

Quick Refresher: What competitive intelligence is & what it isn’t

Competitive intelligence (CI) is a systematic, ongoing process of gathering, analyzing, and interpreting information about competitors, market trends, and industry dynamics to support business decisions.

The key word is ongoing.

CI is not:

- A one-off competitor teardown.

- A market research report focused mostly on customer needs.

- A few slides of SWOT that never get revisited.

- An SEO competitive analysis that only looks at keywords and backlinks.

CI overlaps with competitor analysis, but it is broader and continuous.

If the output does not change a decision, you did not do CI. You gathered trivia.

The competitive intelligence framework

A competitive intelligence framework is the repeatable process and structure that makes CI run.

The simplest useful version is the Define → Gather → Analyze → Implement loop.

1) Define

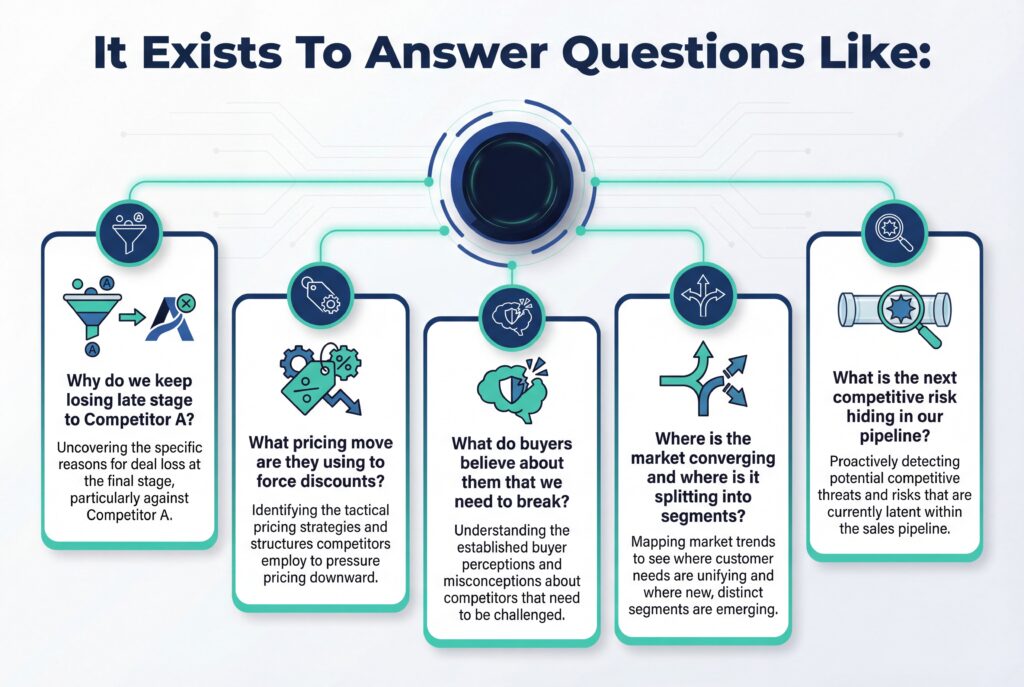

What decisions does CI need to change?

This is where teams go wrong: they start with “track our competitors.” That is not a goal.

Define means:

- The business objective (like win rate, pricing discipline, product differentiation, risk reduction)

- The questions you need answered

- The competitors that matter

- The cadence and the confidence standard

2) Gather

Collect signals from internal and external sources.

Not everything.

Just the sources that can answer your questions with repeatable evidence.

3) Analyze

Turn signals into meaning.

This is where frameworks such as SWOT, PESTLE, Porter’s Five Forces, and scenario planning come in, when they help.

You do not run them because someone expects a slide.

You run them because they create a decision.

4) Implement

Package insights into deliverables people will use.

Then build distribution into existing operating rhythms: forecast calls, QBRs, deal reviews, enablement sessions, roadmap planning.

If CI lives in a folder, it is dead.

Define phase

Define is where you prevent CI from becoming busywork.

Start with intelligence objectives

A strong CI objective is tied to one measurable outcome.

Examples:

- Increase win rate vs Competitor A in Segment X by 10%.

- Reduce discounting pressure in late-stage deals by building pricing defenses.

- Detect emerging entrants before they show up in 30% of deals.

- Identify whitespace for product differentiation in a specific buyer workflow.

Now convert that into CI questions.

Competitor tiering

Not every competitor deserves the same attention.

A simple tiering model works:

- Tier 1: Direct competitors you see in deals constantly. Big overlap. Real threat.

- Tier 2: Niche or rising players. Smaller overlap but growing.

- Tier 3: Indirect entrants and substitutes. Not head-to-head today, but could steal share through a wedge.

Tiering should be grounded in:

- Win-loss frequency.

- Overlap in your highest value segments.

- Threat to your GTM motion.

If you are losing to someone weekly, they are Tier 1 even if you do not want them to be.
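The tiering rules above can be sketched as a small function. This is a minimal sketch, not a standard model. The field names and thresholds are hypothetical: 13 losses a quarter stands in for “weekly,” and 25% is the cutoff for overlap in high-value segments. Tune both to your own win-loss data.

```python
from dataclasses import dataclass


@dataclass
class CompetitorSignals:
    name: str
    losses_per_quarter: int      # deals lost to this competitor (from win-loss data)
    top_segment_overlap: float   # share of high-value-segment deals where they appear, 0 to 1
    head_to_head: bool           # do they compete directly with you today?


# Hypothetical thresholds: tune these to your own pipeline.
WEEKLY_LOSSES_PER_QUARTER = 13   # roughly one loss a week
TIER1_OVERLAP = 0.25


def assign_tier(c: CompetitorSignals) -> int:
    """Return 1, 2 or 3 using the tiering rules described above."""
    # Losing to someone weekly makes them Tier 1, whatever the overlap says.
    if c.losses_per_quarter >= WEEKLY_LOSSES_PER_QUARTER:
        return 1
    # Direct competitor with big overlap in your highest-value segments.
    if c.head_to_head and c.top_segment_overlap >= TIER1_OVERLAP:
        return 1
    # Direct but smaller overlap: a niche or rising player.
    if c.head_to_head:
        return 2
    # Indirect entrants and substitutes.
    return 3
```

For example, `assign_tier(CompetitorSignals("Competitor A", 15, 0.10, False))` returns 1, because loss frequency overrides everything else. Growth in overlap is not modeled here. A fuller version might compare overlap quarter over quarter before promoting a Tier 3 player.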

Cadence & refresh rules

CI dies when it is stale.

Set rules:

- Annual baseline competitor profiles for all Tier 1 and Tier 2 competitors.

- Rolling quarterly updates for Tier 1 competitors.

- Quarterly or semi-annual refresh for the competitive landscape map, depending on how fast your market moves.

High-velocity industries warrant quarterly updates because competitive moves compound fast. You do not need perfection, but you do need freshness plus confidence labels.
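The refresh rules are simple enough to encode. That makes staleness visible instead of leaving it to memory. This is a minimal sketch that assumes each artifact records the date it was last refreshed. The artifact names and day counts are illustrative: 91 days stands for a quarter and 182 for a half-year. Tier 1 profiles use the quarterly interval because it is stricter than the annual baseline.

```python
from datetime import date, timedelta
from typing import Optional


def refresh_interval_days(artifact: str, tier: Optional[int] = None,
                          fast_market: bool = False) -> Optional[int]:
    """Days between refreshes, or None if the artifact has no scheduled refresh."""
    if artifact == "profile":
        if tier == 1:
            return 91    # rolling quarterly updates
        if tier == 2:
            return 365   # annual baseline
        return None      # Tier 3: no scheduled profile
    if artifact == "landscape":
        return 91 if fast_market else 182  # quarterly or semi-annual
    raise ValueError(f"unknown artifact: {artifact}")


def is_stale(last_refreshed: date, interval_days: Optional[int], today: date) -> bool:
    """True when an artifact has gone longer than its interval without a refresh."""
    if interval_days is None:
        return False
    return today - last_refreshed > timedelta(days=interval_days)
```

Take a Tier 1 profile last refreshed on 1 January. By 1 June it is stale: `is_stale(date(2024, 1, 1), refresh_interval_days("profile", 1), date(2024, 6, 1))` returns True.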

Gather phase

Gathering does not mean “collect everything.”

It is building a repeatable intake system that produces usable evidence.

Competitive intelligence research methods

CI uses mixed research methods, which fall into two broad categories.

Primary research:

- Win-loss interviews

- Customer interviews

- Surveys

- Expert interviews

- Mystery shopping

Primary research is where you get the why.

It explains buyer perception, competitor tactics, and what mattered in the decision.

Secondary research:

- Public filings and financial disclosures

- Press releases and product announcements

- Patents

- Analyst reports

- Websites and documentation

- Social media and events

- Review sites like G2 or Trustpilot

- Job postings and hiring patterns

Secondary research is where you get scale.

It helps you monitor movement and validate primary findings.

Competitor intelligence gathering system

To keep this scalable, group sources by type:

- Public data (press, filings, patents, analyst notes, public decks, public pricing pages)

- Digital signals (website changes, product releases, app store signals, social activity, event agendas)

- People sources (customers, prospects, partners, ex-employees, community chatter)

- Internal data (CRM notes, call recordings, objection tags, win-loss reasons, support tickets)

Internal data is the most underused source. It is also the most relevant to revenue execution. If you have a CRM and a call platform, you already have a competitive dataset. You just do not treat it like one.
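Treating CRM notes as a dataset starts with normalizing how reps name competitors. This is a minimal sketch that assumes you can export free-text notes as strings. The alias map is hypothetical, including “acme” as a nickname for Competitor A. Simple keyword matching will also miss misspellings that a real pipeline would need to catch.

```python
import re
from collections import Counter
from typing import Dict, List

# Hypothetical alias map: the different ways reps refer to each competitor.
ALIASES: Dict[str, List[str]] = {
    "Competitor A": ["competitor a", "comp a", "acme"],
    "Competitor B": ["competitor b", "beta corp"],
}


def count_mentions(notes: List[str]) -> Counter:
    """Count how many notes mention each competitor (at most once per note)."""
    counts: Counter = Counter()
    for note in notes:
        text = note.lower()
        for canonical, aliases in ALIASES.items():
            # \b word boundaries stop "comp a" from matching inside "comp about".
            if any(re.search(rf"\b{re.escape(alias)}\b", text) for alias in aliases):
                counts[canonical] += 1
    return counts
```

Consider the notes “Lost to Acme on price” and “Comp A and Beta Corp both in the deal.” This counts Competitor A twice and Competitor B once.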

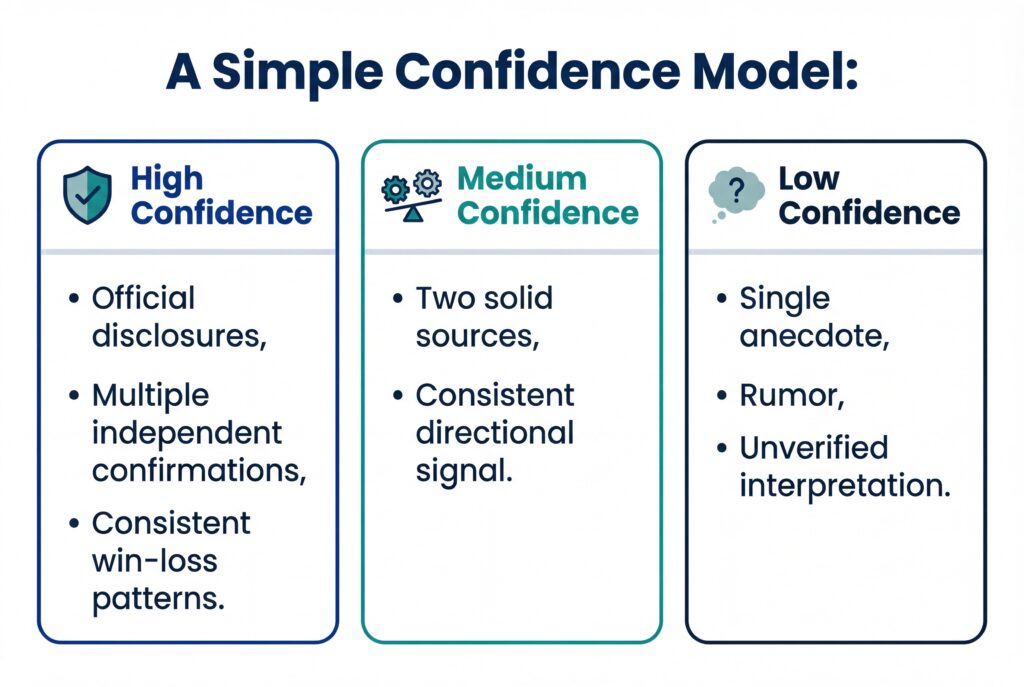

Validation & confidence scoring

CI loses credibility when anecdotes get treated as fact.

So validation is not optional. Best practice is to triangulate across 3+ independent sources before you treat a claim as true.

Every claim should carry a confidence tag (for example, high, medium, or low). This protects trust.
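The triangulation rule can be made mechanical. This is a minimal sketch that assumes each piece of evidence records where it ultimately came from. The `origin` field is an assumption, and so are the high/medium/low labels. “High” is reserved for the 3+ independent sources described above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Evidence:
    source_type: str   # e.g. "win-loss interview", "pricing page", "review site"
    origin: str        # where it ultimately came from, used to spot duplicates


@dataclass
class Claim:
    statement: str
    evidence: List[Evidence] = field(default_factory=list)


def independent_source_count(claim: Claim) -> int:
    # Two articles repeating the same press release share one origin,
    # so they count as a single source.
    return len({e.origin for e in claim.evidence})


def confidence_tag(claim: Claim) -> str:
    n = independent_source_count(claim)
    if n >= 3:
        return "high"    # triangulated: safe to treat as true
    if n == 2:
        return "medium"  # corroborated, but keep watching
    return "low"         # single anecdote: watch, do not act
```

The key design choice is counting distinct origins rather than raw items. Two articles rewriting the same press release are one source, not two.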

Ethics & boundaries

Real CI is not espionage.

- Use open sources.

- Respect privacy.

- Respect terms of use.

- No hacking, bribery, deception, or anything that puts your company at risk.

Analyze phase

Analysis is where CI becomes a competitive advantage.

But it’s also where teams waste time…

The difference is whether you choose the right lens for the job.

The three analysis layers

Think of analysis as three distinct layers:

- Competitive assessment framework

- Competitive landscape framework

- Competitive landscape analysis framework

They sound similar. They are not.

Each exists for a different decision.

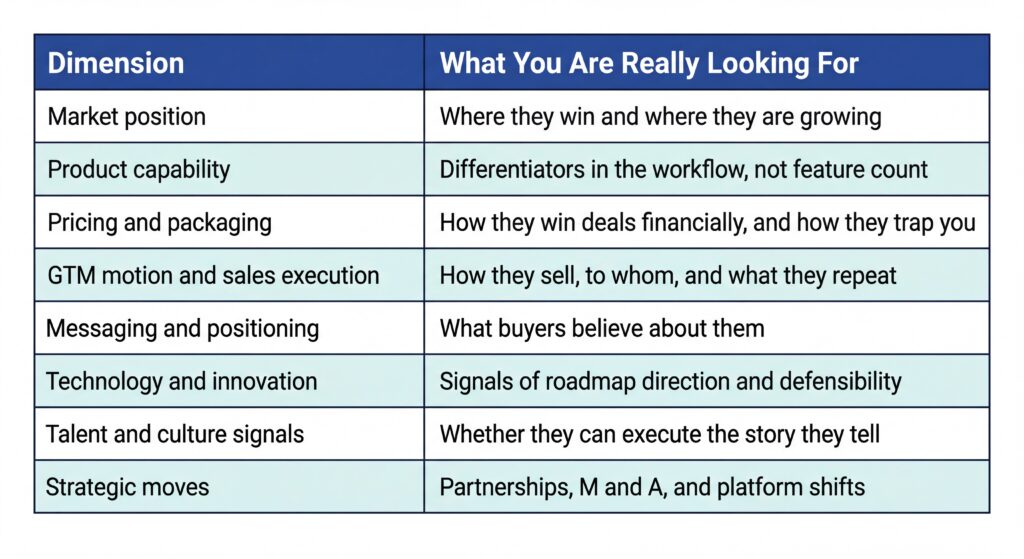

Competitive assessment framework

A competitive assessment framework is how you evaluate specific competitors relative to your business.

It is not a generic checklist. It is a systematic way to compare what matters.

The dimensions that matter

Use a set of dimensions that covers the whole competitive space without overlap.

Scoring without turning it into opinion

Use a consistent scale, and weight each criterion according to your strategy.

The rule:

If you cannot point to evidence, you do not get to score it.

Evidence can be win-loss patterns, review themes, pricing pages, job postings, product documentation, or sales call transcripts.
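“No evidence, no score” is easy to enforce in code. This is a minimal sketch that assumes a 1 to 5 scale and weights that reflect your strategy. The criteria names are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Score:
    value: int            # 1 to 5
    evidence: List[str]   # pointers: call transcripts, review themes, pricing pages...


def weighted_score(scores: Dict[str, Score], weights: Dict[str, float]) -> Optional[float]:
    """Weighted average on the 1 to 5 scale; criteria without evidence are skipped."""
    total = 0.0
    total_weight = 0.0
    for criterion, weight in weights.items():
        s = scores.get(criterion)
        if s is None or not s.evidence:
            continue  # if you cannot point to evidence, you do not get to score it
        if not 1 <= s.value <= 5:
            raise ValueError(f"{criterion}: score {s.value} is outside 1 to 5")
        total += weight * s.value
        total_weight += weight
    # None means nothing was evidenced, which is different from a low score.
    return total / total_weight if total_weight else None
```

Suppose the weights are 0.5 for pricing, 0.3 for product depth, and 0.2 for support, and support has no evidence. The result is then the weighted average of pricing and product depth only. Skipping unevidenced criteria and renormalizing is one choice. You could instead flag the whole scorecard as incomplete.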

When to refresh

Refresh annually at minimum.

High-velocity markets should refresh Tier 1 competitor assessments quarterly.

Competitive landscape framework

A competitive landscape framework maps all relevant players and factors in the market.

This is where you stop thinking about competitors one by one and start seeing the structure of the category.

Define scope first

A landscape map is only useful if it has boundaries.

- Segment and buyer profile

- Geography

- Category boundaries

- What counts as a substitute

Skip scope and you get a map of “everyone we have heard of.”

Choose axes executives can absorb

Use two axes, or three if bubble size adds a meaningful dimension.

Examples:

- Market share vs growth

- Price vs product depth

- SMB vs enterprise focus

- Workflow breadth vs specialization

Include direct, indirect & emerging entrants

Disruptors often enter with a wedge. They look irrelevant until they are not.

So your map should include direct competitors, adjacent players, and emerging entrants or substitutes.

Refresh cadence

Quarterly or semi-annual. Fast markets need more frequent refreshes.

Competitive landscape analysis framework

Mapping shows you the market; analysis tells you what to do about it.

A competitive landscape analysis framework applies analytical lenses to the mapped information.

Lenses that produce decisions

- SWOT for summarizing strengths, weaknesses, opportunities, and threats, tied to action.

- Porter’s Five Forces for structural industry pressure.

- PESTLE for macro shifts like regulation and technology.

- Scenario planning for volatile categories.

White-space analysis

White space is not simply “what competitors do not do.”

It is unmet buyer need plus willingness to pay. Overlay buyer feedback, usage patterns, objections, and loss reasons onto your landscape map.

Look for:

- Jobs consistently underserved

- Hacks and workarounds buyers mention

- Pain no one claims

Saturation signals

Saturation looks like pricing compression, feature convergence, declining growth, and review themes complaining that products feel the same.

Convergence vs segmentation

Ask one question: is the market converging or segmenting?

- Convergence pushes you toward proof, packaging, and execution.

- Segmentation pushes you toward focus and sharper positioning.

Pitfalls to avoid

Outdated data, too many metrics, shallow frameworks, and analysis that never connects back to pipeline reality.

Implement phase

Implementation is where CI becomes usable.

This is also where it dies.

Most CI output fails because it is not tied to a workflow.

Translate insights into actions by function

Sales

- Battlecards that match real objections

- Pricing defenses

- Deal plays by stage and persona

RevOps

- CRM field standardization for competitor tracking

- Competitive risk triggers by stage

- Dashboards showing competitive loss reasons and trends

Marketing

- Positioning updates

- Proof libraries and narrative testing

Product

- Gap analysis anchored to buyer jobs

- Roadmap decisions tied to defensible differentiation

Distribution & adoption

CI should show up where decisions happen:

- Forecast calls

- Deal reviews

- QBRs

- Enablement

- Planning

CI should not require “go read this doc.”

It should show up as a brief in the meeting, a prompt in the workflow, or a recommended counter when a competitor shows up.

Governance & prioritization

A CI loop needs triage.

Set a lightweight governance model:

One owner, one intake path, one output standard, and a recurring triage meeting.
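A recurring triage meeting goes faster when the intake queue arrives pre-sorted. This is a minimal sketch. The request fields are assumptions, not a prescribed standard, and so is the sort order: competitor tier first, then nearest decision date, then revenue at risk.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class IntakeRequest:
    title: str
    competitor_tier: int      # 1, 2 or 3 from your tiering model
    needed_by: date           # when the decision happens (deal review, QBR, planning)
    revenue_at_risk: float    # open pipeline the question affects


def triage_order(requests: List[IntakeRequest]) -> List[IntakeRequest]:
    """Sort by tier, then nearest decision date, then largest revenue at risk."""
    return sorted(
        requests,
        key=lambda r: (r.competitor_tier, r.needed_by, -r.revenue_at_risk),
    )
```

The owner still makes the call in the meeting. The sort only decides what gets discussed first.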

Competitive intelligence templates & deliverables

Templates are where CI turns into a repeatable system.

The goal is not to create more documents, but to create outputs that can be refreshed, compared, and used.

Competitor profile template

Include fields that change decisions:

- Company overview and segments

- Target customers and use cases

- Product scope and core workflows

- Pricing model and packaging notes

- Strengths and vulnerabilities

- Messaging claims and proof

- Recent strategic moves

- Likely intent

- Confidence tags

Cut long history and trivia.

Competitive assessment scorecard template

A scorecard includes competitors as columns, criteria as rows, weights, evidence notes, and confidence ratings.

Competitor battlecard template

A usable battlecard includes:

- Competitor pitch as buyers say it

- Where they win

- Where they are weak

- What they will say about you

- How to respond

- Proof points

- Landmines

- Talk tracks by persona

- Next best actions

Competitive landscape map template

Include scope, axes, player categories, bubble size if relevant, annotations, and versioning.

Executive CI report template

One page:

- Top market shifts

- Top competitor moves

- Pipeline risks

- Opportunities

- Recommendations

- Confidence tags

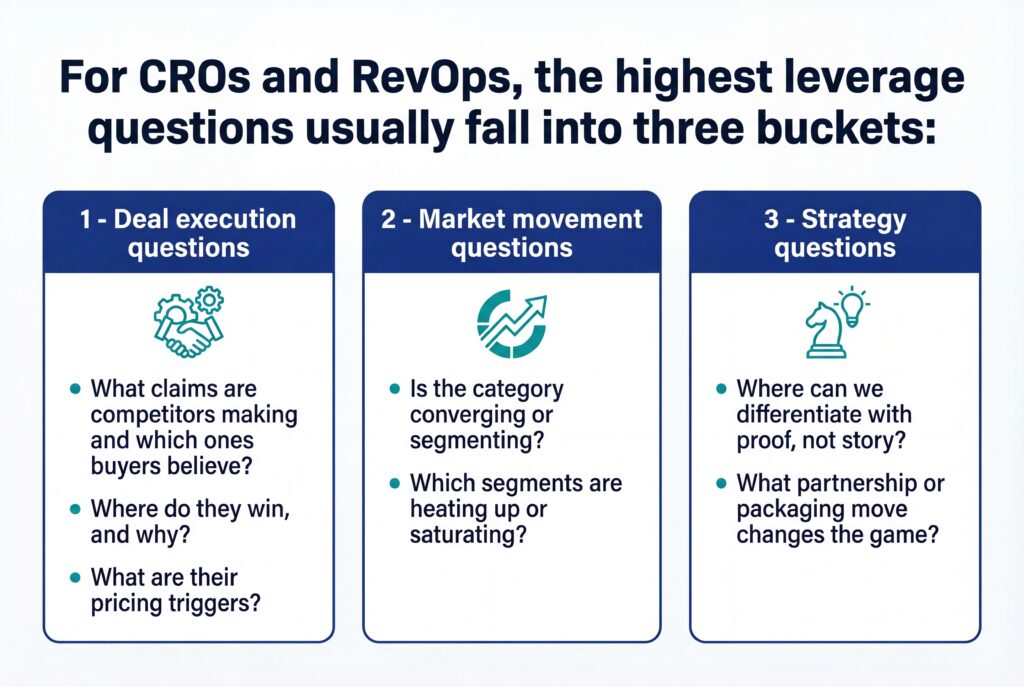

Operating model for a CI program built for CRO and RevOps

Framework plus templates still fail without an operating model.

You need roles, workflow, and measurement.

Roles & responsibilities

A simple model:

- CI owner runs the loop and standards

- Sales logs structured competitor encounters

- RevOps owns CRM structure and reporting

- Enablement turns CI into plays and coaching

- Leadership sponsor makes CI part of the operating rhythm

Tooling & automation without drowning

Automate signal collection where it helps, like alerts, review monitoring, pricing page change detection, and job posting tracking.

But keep humans in the loop for validation, interpretation, and translating insight into action.
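Pricing page change detection is one of the easier signals to automate. This is a minimal sketch using only the Python standard library. It fingerprints the visible text of a page, so markup-only edits do not trigger alerts. The regex-based tag stripping is deliberately crude, and where you store the last fingerprint is up to you. A changed fingerprint should go to a person for validation, not straight into a battlecard. Automated fetching should also stay within the site’s terms of use.

```python
import hashlib
import re
import urllib.request
from typing import Optional, Tuple


def fetch_html(url: str) -> str:
    """Download a page (check the site's terms of use before scheduling this)."""
    with urllib.request.urlopen(url, timeout=10) as resp:
        return resp.read().decode("utf-8", errors="replace")


def normalize(html: str) -> str:
    """Crudely reduce HTML to lowercase visible text with collapsed whitespace."""
    html = re.sub(r"(?is)<(script|style)\b.*?</\1>", " ", html)  # drop scripts/styles
    text = re.sub(r"<[^>]+>", " ", html)                          # drop remaining tags
    return re.sub(r"\s+", " ", text).strip().lower()


def fingerprint(html: str) -> str:
    return hashlib.sha256(normalize(html).encode("utf-8")).hexdigest()


def check_for_change(html: str, last_fingerprint: Optional[str]) -> Tuple[bool, str]:
    """Return (changed, new_fingerprint). The first observation never counts as a change."""
    fp = fingerprint(html)
    return (last_fingerprint is not None and fp != last_fingerprint), fp
```

A scheduled job might call `check_for_change(fetch_html(url), stored_fp)`, where `url` and `stored_fp` are placeholders for your own values. It would then save the new fingerprint and open a review task when `changed` is True.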

Metrics that prove CI works

Revenue outcomes

- Win rate vs top competitors

- Cycle time in competitive deals

- Pricing hold rate

- Forecast variance reduction

Operating outcomes

- Battlecard and play adoption

- Time from signal to usable insight

- Reduction in losses with an “unknown” reason
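Two of these metrics fall straight out of closed-deal data. This is a minimal sketch that assumes each closed deal in your CRM carries a primary competitor field and a loss reason. The record shape is an assumption, and so is treating a blank reason as “unknown.” Tracking these per quarter shows the trend, which matters more than any single reading.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class ClosedDeal:
    competitor: Optional[str]           # primary competitor logged in CRM, None if none
    won: bool
    loss_reason: Optional[str] = None   # "unknown" or blank when the rep could not say


def win_rate_by_competitor(deals: List[ClosedDeal]) -> Dict[str, float]:
    """Share of competitive deals won, per competitor."""
    wins: Dict[str, int] = defaultdict(int)
    totals: Dict[str, int] = defaultdict(int)
    for d in deals:
        if d.competitor is None:
            continue  # non-competitive deals do not count toward win rate vs competitors
        totals[d.competitor] += 1
        wins[d.competitor] += int(d.won)
    return {c: wins[c] / totals[c] for c in totals}


def unknown_loss_share(deals: List[ClosedDeal]) -> float:
    """Fraction of lost deals with no usable loss reason."""
    losses = [d for d in deals if not d.won]
    if not losses:
        return 0.0
    unknown = sum(1 for d in losses if d.loss_reason in (None, "", "unknown"))
    return unknown / len(losses)
```
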

Optional activities die. CI has to prove it is changing outcomes.

Frequently Asked Questions

What’s the difference between a competitive intelligence framework and a competitive landscape framework?

A competitive intelligence framework defines the full operating loop: what to track, how to analyze it, and how insights get used. A competitive landscape framework is one output of that system. It visually maps players and segments, but on its own, it doesn’t drive decisions.

How often should a competitive intelligence strategy be updated?

Your competitive intelligence strategy should be reviewed at least quarterly. Not because the framework changes, but because competitors do. Pricing moves, new entrants, and GTM shifts can invalidate assumptions faster than annual planning cycles allow.

What’s the biggest mistake teams make in competitor intelligence gathering?

Most teams collect too much and validate too little. Competitor intelligence gathering fails when single anecdotes get treated as truth. High-quality CI triangulates signals across multiple sources and labels confidence clearly, so leaders know what to act on and what to watch.

Can small teams realistically run a competitive assessment framework?

Yes. A competitive assessment framework scales down well when it’s focused. You only need Tier 1 competitors, a small set of decision-relevant criteria, and a quarterly refresh. CI breaks when teams try to be comprehensive instead of useful.

Conclusion

Competitive intelligence only matters if it changes how strategy gets set.

A strong competitive intelligence framework does exactly that. It forces clarity on who actually threatens you, why deals are won or lost, and where the market is tightening or opening up.

When CI is defined properly, gathered with discipline, analyzed with the right lenses, and tied back to real decisions, it stops being background noise and starts shaping pricing, positioning, planning, and forecasts.

The teams that get this right do not guess less.

They decide faster, with fewer blind spots and far less internal debate.

If you want to see how this kind of strategic rigor comes together, start a free trial to explore how EnableU’s Sales Excellence Framework and its eight standards help teams design competitive strategy that holds up before execution ever begins.
